Experimenting with AI Generated Videos for Language Learning

Intro

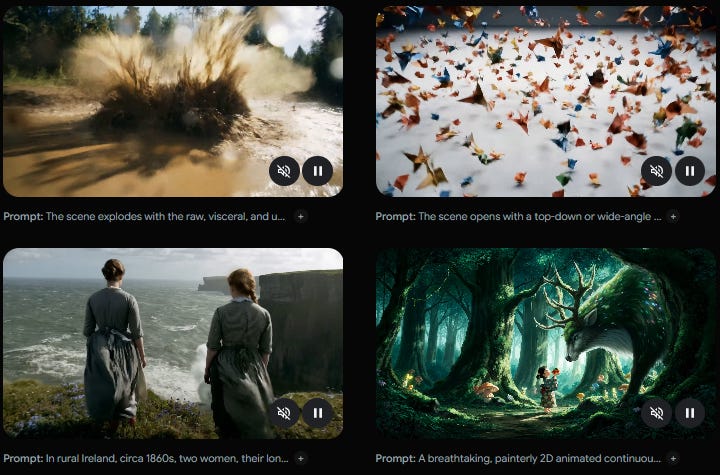

Google released a new text-to-video-and-audio model called Veo 3. The videos (with sound) it can generate are pretty impressive. You can see some samples here.

Screen grab from: https://deepmind.google/models/veo/

New GenAI tool, cool! But how can it be used for language learning and teaching? In this post I offer some initial thoughts and sample videos I generated.

By the way, if you have a .edu email address, you can sign up for Google AI Pro for free (before June 30th, 2025), thereby getting access to Veo 3. I highly recommend that educators and students sign up, as you will likely get access to better models as Google releases them over the next year. Just be sure to cancel your subscription after you sign up, or you will be charged in 2026.

The What & The How

Teachers and students can generate 8-second AI videos to learn vocabulary items and phrases.

Example 1: Word of the day: Sorpresa (surprise)

The video starts with text introducing the word: Palabra del día: Sorpresa (Word of the day: surprise). It ends with “sorpresa” and “Significa: algo inseperado” (surprise, it means: something unexpected). I love the design, including the colors, music, and sound. It is perfectly designed for the TikTok generation: short, dramatic, and just long enough to keep someone from scrolling for 8 more seconds. However, the word for unexpected is misspelled and should be inesperado. It turns out spelling words correctly is one thing this model frequently fails at.

I generated the video using the Gemini chatbot; the screenshot below shows the tab you need to click on.

I used ChatGPT 4o to create all the prompts for the videos in this post, and then I copied the output into Gemini.

Example 2: Word of the day: Sorpresa, take two

After generating a few videos I quickly realized that the model doesn’t spell words well. So I decided to generate another word of the day video for the word sorpresa, but this time have someone pronounce the word and definition rather than displaying them as words on the screen.

I like the design of the first video more. The weird hands and arm at the end of this version are indeed a surprise. But the video works. I can simply have the characters on screen pronounce the word of the day rather than showing the text (although doing both would be best). This way I don’t have to worry about learning words with incorrect spelling.

Example 3: Word of the day: Rápido (fast)

Two issues: 1, the misspelling of rápido in the introduction, and 2, the boy’s head coming out of the race car’s tire. Although, to be honest, the boy’s head coming out of the tire might actually help students remember the word. His facial expression looks as if he were driving very fast with the wind rushing against his face.

My guess is that the prompt asked for too many things in 8 seconds (such as a man running, a race car, a cheetah). I think that if I were to simplify the prompt I’d get a cleaner result (without a random head appearing).
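As a rough illustration of what a simplified prompt might look like, here is a minimal Python sketch that builds a prompt with a single scene and a spoken word instead of on-screen text. The function name, its parameters, and the prompt wording are all hypothetical; I have not tested this wording with Veo 3, and the output is just text to paste into Gemini.

```python
def build_word_of_day_prompt(word: str, scene: str, seconds: int = 8) -> str:
    """Build a text prompt for a short word-of-the-day video.

    Hypothetical helper: the wording is an illustration only,
    not a prompt that has been tested with Veo 3.
    """
    lines = [
        # One scene only, to avoid cramming too much into a few seconds.
        f"A {seconds}-second video with a single scene: {scene}.",
        # Have the word spoken aloud rather than written on screen.
        f'A narrator says clearly in Spanish: "{word}".',
        # The model often misspells text, so ask for none.
        "No on-screen text, captions, or subtitles.",
    ]
    return "\n".join(lines)


prompt = build_word_of_day_prompt(
    word="rápido",
    scene="a cheetah sprinting across a savanna",
)
print(prompt)
```

Keeping the scene to one subject and asking for no text addresses the two problems seen so far, although whether the model actually follows these instructions is something I would still need to check.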

Examples 4 & 5: You’re killing me, friend!

In the video above the woman says “You’re killing me, friend.” In the video below the woman says “Don’t play with my heart!” After watching these videos I don’t think I’ll forget these phrases. Me estás matando is going to be my go-to phrase when I interact with my Spanish-speaking colleagues.

As a language teacher I think it would be fun to assign students a short phrase, have them generate a video with Veo 3, and then have them introduce the phrase and what it means. I think students would find the good videos impressive and the bad ones humorous.

I’ve reached my video generation limit for the week, but I now have some ideas of how I can refine my prompts.

The Why

When our brain receives sensory information, it has to decide what to do with it. If it is worth focusing on, then the brain has to label and categorize that information. This process is called encoding. There are different types of encoding, including semantic, visual, and auditory. With these videos students get all of these modalities.

The self-reference effect suggests that when you learn something new you are more likely to remember it if you can find some way to relate it to yourself, your context, and your life experience. By using AI to generate videos depicting vocabulary and phrases, students and teachers can tailor the videos to the learners’ interests and lives, thereby making the language more memorable.

How would you use this GenAI tool in your classroom?

The Future

As language educators, we shouldn’t only be experimenting with the GenAI tools that exist now; we should also be considering the trajectory of these tools and what is to come. This is the worst that AI text-to-video-and-audio models will ever be.

When a future version of this model comes out it will perform better. Not only will it spell correctly, it will likely follow directions better. The model will come with better tools for stitching videos together, editing videos, and generating videos from an image the user uploads. In short, users will have more creative control and the model will become more reliable.

To me, the evidence suggests that language educators will play a different role in the near future. Instead of teaching language, we will be instructional designers and creative directors. Perhaps we will teach/show students HOW to learn and point out WHAT they need to learn, and then let them use AI models to teach them through personalized learning.

In a recent interview (which I recommend watching), Demis Hassabis (CEO of Google DeepMind) responds to the question of what students should study, given the future development of AI. Demis suggests that students [emphasis mine]:

“become a ninja in using the latest tools… but don’t neglect the basics too because you need the fundamentals and then… meta skills like learning to learn…

We know for sure there’s going to be a lot of change over the next 10 years…. what kind of skills are useful for that? Creativity skills, adaptability, resilience. I think all of these sort of, you know, meta skills is what will be important for the next generation.”

Sound bite starts at 52:28 and ends at 53:42.

I’ve said it before and I’ll say it again. Language educators ought to be discussing what the future of language education looks like as we get closer to Artificial General Intelligence.

Personalized Netflix with scaffolded language learning? AI generated VR language learning environments that react in real time? What will the future bring?